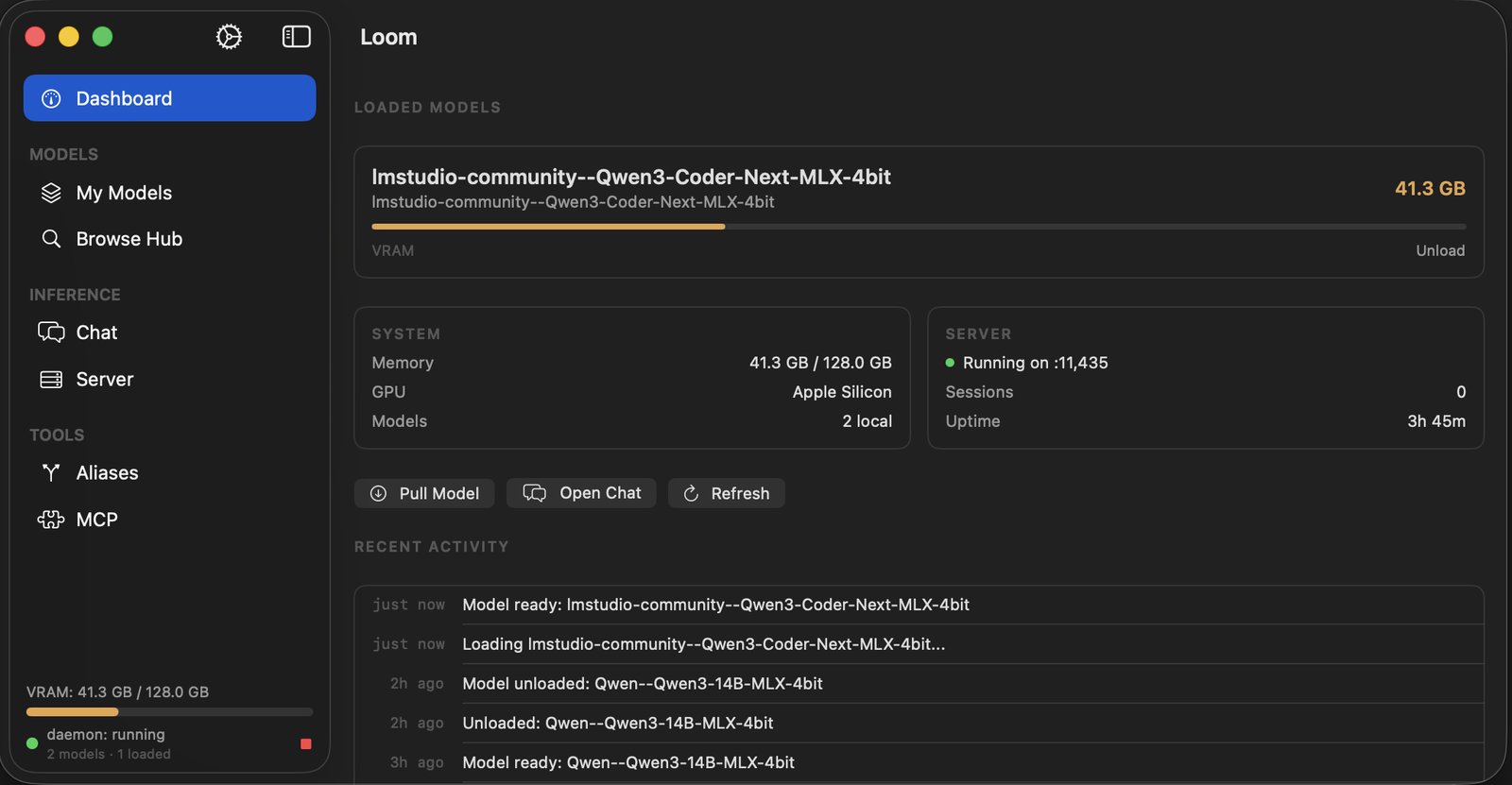

Loom is a native macOS application for running large language models on your own hardware, for those occasions when you would rather not send every idle thought to someone else's data center. Built entirely in Swift on Apple's MLX framework, it ships an OpenAI-compatible API server, a command-line interface, and a SwiftUI desktop app. You can download models from Hugging Face, run inference with streaming token generation, and generate text embeddings. All on-device, no cloud required, no one reading over your shoulder.

It also includes an MCP server for integration with tools like Claude Desktop and Cursor, which means your local models can participate in your development workflow as though they were invited.
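
Because the API server speaks the OpenAI wire format, any plain HTTP client should be able to talk to it. The sketch below is a minimal illustration in Python using only the standard library. The base URL, port, and model name are placeholders and not taken from Loom's documentation, and the `/chat/completions` path is assumed from the OpenAI convention; `build_chat_request` and `send_chat` are hypothetical helper names.

```python
import json
import urllib.request

# Assumptions: Loom's real host, port, and model identifiers are not stated
# in this README. Replace these placeholders with the values your install uses.
BASE_URL = "http://localhost:8080/v1"
MODEL = "example-model"


def build_chat_request(prompt, base_url=BASE_URL, model=MODEL):
    """Build an OpenAI-style chat completion request without sending it."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # set to True to ask for streamed tokens instead
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat(prompt):
    """Send the request and return the first reply (requires a running server)."""
    with urllib.request.urlopen(build_chat_request(prompt)) as resp:
        body = json.load(resp)
    # OpenAI-format responses place the reply under choices[0].message.content.
    return body["choices"][0]["message"]["content"]
```

Calling `send_chat("Hello")` with the server running should return the model's reply as a string. Existing OpenAI client libraries can usually be pointed at a local server the same way, by overriding their base URL.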